Introduction

There has been a lot of discussion about compiling GNU/Linux from scratch and the performance boost attributed to it. I have never been a big believer in this, except for applications such as multimedia encoding. Many GNU/Linux distributions ship executables that run on virtually any i386-compatible processor but are tuned for a particular CPU. Some distributions instead ship executables that drop compatibility with old processors and assume, for instance, a Pentium 3 as the oldest supported architecture. How much difference does it really make?

Attention! Sophisticated scientific methods and highly precise measurements ahead. You have been warned!

Test setup and environment

My test environment isn’t the best possible: I’m running an AMD Athlon 64 X2 (cpuinfo) at 2400 MHz (i.e. a 4600+) with 2 GiB of dual-channel memory. My Debian GNU/Linux (unstable) installation is pure 64-bit; 32-bit executables are run in a 32-bit chroot, also from Debian unstable. The CPU clock frequency governor is in use, but the minimum frequency is manually set to 2400 MHz. Because the machine has two cores, the combined tests are rather irrelevant, but they are included nevertheless.

Tests and measurements

I decoded and encoded approximately 48 minutes of CD-quality stereo audio with the latest oggdec and oggenc. Unfortunately I read the input data from disk instead of a RAM disk, but hopefully measuring with /usr/bin/time has negated the effect. For reference, reading the uncompressed wave file took “0.01user 0.21system”, as reported by /usr/bin/time, in the worst-case run. The sample is a full dump of Christopher Franke’s album Klemania.

I recompiled not only vorbis-tools but also libc6, libogg0, libvorbis0a, and libvorbisenc2. The sources and default compiler options were taken from the Debian unstable repository at ftp.fi.debian.org around 21 August 2007, 22:00:00 UTC+3. For the optimised versions, the switches -O3 -fomit-frame-pointer -march=athlon-xp and -O3 -fomit-frame-pointer -march=athlon64 were added for the 32-bit and 64-bit executables, respectively. The self-compiled libraries were then loaded with LD_PRELOAD, except for ld-linux.so/ld-linux-x86-64.so and linux-gate.so.1. For reasons unknown to me, preloading ld-linux caused a segfault upon running any executable.
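As a rough illustration of the preloading step, here is a minimal Python sketch that builds an environment with LD_PRELOAD pointing at a set of self-compiled libraries. The library paths and the `preload_env` helper are hypothetical and only stand in for wherever the rebuilt libraries actually live; this is not the script used for the measurements.

```python
import os


def preload_env(libraries, base_env=None):
    """Return a copy of the environment with LD_PRELOAD set to `libraries`."""
    env = dict(os.environ if base_env is None else base_env)
    # The dynamic linker accepts a colon-separated list of shared objects.
    env["LD_PRELOAD"] = ":".join(libraries)
    return env


# Hypothetical locations of the self-compiled libraries (assumption).
OPTIMISED_LIBS = [
    "/opt/optimised/lib/libc.so.6",
    "/opt/optimised/lib/libogg.so.0",
    "/opt/optimised/lib/libvorbis.so.0",
    "/opt/optimised/lib/libvorbisenc.so.2",
]

env = preload_env(OPTIMISED_LIBS, base_env={"PATH": "/usr/bin"})
print(env["LD_PRELOAD"])
```

The resulting dictionary could be passed as `env=` to `subprocess.run` so that only the benchmarked process sees the preloaded libraries.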

Finally, the tests were run overnight with run.sh and manually interrupted in the morning after 24 full rounds had completed.
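The contents of run.sh are not reproduced here, so the following is only a sketch of how such a loop could look in Python. It assumes that the quantity of interest is user plus system CPU time of the child process, similar to what /usr/bin/time reports; the `cpu_time` and `benchmark` names and the stand-in workload are my own illustration.

```python
import resource
import subprocess
import sys


def cpu_time(command):
    """Run `command` and return (user, system) CPU seconds it consumed."""
    before = resource.getrusage(resource.RUSAGE_CHILDREN)
    subprocess.run(command, check=True, stdout=subprocess.DEVNULL)
    after = resource.getrusage(resource.RUSAGE_CHILDREN)
    return after.ru_utime - before.ru_utime, after.ru_stime - before.ru_stime


def benchmark(command, rounds):
    """Return the user+system CPU time of `command` for each round."""
    return [sum(cpu_time(command)) for _ in range(rounds)]


# Stand-in workload; a real run would call oggenc or oggdec instead.
timings = benchmark([sys.executable, "-c", "sum(range(100000))"], rounds=3)
print(timings)
```

Measuring CPU time rather than wall-clock time is what keeps disk waits mostly out of the figures.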

Results

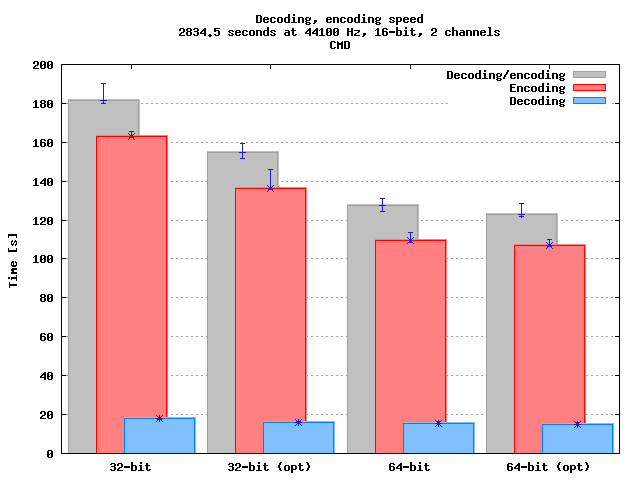

Processing time

| Task | Executable | Average [s] | Median [s] | sdev [s^2] | Minimum [s] | Maximum [s] |
|---|---|---|---|---|---|---|
| Encoding | 32-bit | 163.61 | 162.83 | 0.7539 | 162.43 | 165.47 |
| Encoding | 32-bit opt | 137.52 | 136.25 | 2.4017 | 135.93 | 145.84 |
| Encoding | 64-bit | 109.80 | 109.47 | 1.1295 | 108.64 | 113.49 |
| Encoding | 64-bit opt | 107.47 | 107.17 | 0.5934 | 106.56 | 109.90 |
| Decoding | 32-bit | 17.81 | 17.84 | 0.0761 | 17.69 | 18.02 |
| Decoding | 32-bit opt | 16.12 | 16.04 | 0.1311 | 16.01 | 16.62 |
| Decoding | 64-bit | 15.50 | 15.42 | 0.2577 | 15.33 | 16.53 |
| Decoding | 64-bit opt | 15.15 | 15.06 | 0.1989 | 15.00 | 16.08 |
| Combined | 32-bit | 182.74 | 181.53 | 2.1005 | 180.05 | 190.35 |
| Combined | 32-bit opt | 154.27 | 154.58 | 2.0737 | 151.69 | 159.60 |
| Combined | 64-bit | 126.39 | 127.39 | 1.6426 | 124.30 | 130.94 |
| Combined | 64-bit opt | 123.38 | 122.69 | 1.3600 | 121.85 | 128.79 |
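For readers who want to reproduce the columns above from their own logs, a minimal sketch using Python’s statistics module follows. The sample values are made up for illustration and are not my measurements, and I am assuming the sample standard deviation here; a different estimator would give slightly different sdev figures.

```python
import statistics


def summarise(timings):
    """Summarise a list of per-round timings in seconds."""
    return {
        "average": statistics.mean(timings),
        "median": statistics.median(timings),
        "sdev": statistics.stdev(timings),  # sample standard deviation
        "minimum": min(timings),
        "maximum": max(timings),
    }


# Made-up example data, not taken from the benchmark logs.
sample = [10.0, 11.0, 12.0, 13.0, 14.0]
summary = summarise(sample)
for name, value in summary.items():
    print(f"{name}: {value:.4f}")
```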

Impact

| Task | Arch | Unoptimised [s] | Optimised [s] | Difference [s] | Relative difference |
|---|---|---|---|---|---|
| Encoding | 32-bit | 163.61 | 137.52 | 26.10 | -0.159 |
| Encoding | 64-bit | 109.80 | 107.47 | 2.33 | -0.0213 |
| Decoding | 32-bit | 17.81 | 16.12 | 1.69 | -0.0950 |
| Decoding | 64-bit | 15.50 | 15.16 | 0.35 | -0.0223 |
| Combined | 32-bit | 182.74 | 154.27 | 28.46 | -0.156 |
| Combined | 64-bit | 126.39 | 123.38 | 3.02 | -0.0239 |
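The last column is the change in average time relative to the unoptimised build. A short sketch of that arithmetic, using the rounded averages from the table, is below; because the inputs are already rounded, the last digit can differ slightly from the table, which was presumably computed from unrounded data.

```python
def relative_difference(unoptimised, optimised):
    """Change in processing time relative to the unoptimised build."""
    return (optimised - unoptimised) / unoptimised


# Rounded average times in seconds, copied from the table above.
averages = {
    ("Encoding", "32-bit"): (163.61, 137.52),
    ("Encoding", "64-bit"): (109.80, 107.47),
    ("Decoding", "32-bit"): (17.81, 16.12),
    ("Decoding", "64-bit"): (15.50, 15.16),
    ("Combined", "32-bit"): (182.74, 154.27),
    ("Combined", "64-bit"): (126.39, 123.38),
}

for (task, arch), (unopt, opt) in averages.items():
    print(f"{task:8} {arch}: {relative_difference(unopt, opt):+.4f}")
```

A negative value means the optimised build needed less time.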

Other results

Before running these “final tests” I did some initial testing by running the same scripts on shorter samples with only vorbis-tools optimised. In my infinite wisdom I have already deleted the raw log files, so I cannot present any fancy figures or render pretty graphs. Fortunately, I did not delete the old graphs. They’re ugly, but they carry most of the information the new ones do.

Unexpectedly, encoding actually takes longer! However, since most multimedia applications rely on libraries to do the work, it is clear that optimising just the executable won’t help. It can actually make things worse.

Difference between 64-bit and 32-bit executables

While I wasn’t exactly looking to compare 64-bit and 32-bit executables, it seems that Vorbis encoding benefits from those extra bits and CPU registers. Encoding gains a 30% performance boost, decoding 10%. This is unlikely to have a huge impact on the general usability of your computer, but again, when working with heavy multimedia content, switching from 32 bits to 64 bits can reduce the risk of dying of old age while waiting for the audio compressor to finish its task.
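How large the gain looks depends on whether it is expressed as time saved or as throughput gained. The sketch below works both out from the rounded averages of the unoptimised builds in the processing-time table; the function names are just for illustration.

```python
def time_reduction(slow, fast):
    """Fraction of processing time saved by the faster build."""
    return (slow - fast) / slow


def speedup(slow, fast):
    """How many times more work per second the faster build does."""
    return slow / fast


# Average times in seconds for the unoptimised 32-bit and 64-bit builds.
encoding_32, encoding_64 = 163.61, 109.80
decoding_32, decoding_64 = 17.81, 15.50

print(f"encoding: {time_reduction(encoding_32, encoding_64):.1%} less time, "
      f"{speedup(encoding_32, encoding_64):.2f}x throughput")
print(f"decoding: {time_reduction(decoding_32, decoding_64):.1%} less time, "
      f"{speedup(decoding_32, decoding_64):.2f}x throughput")
```

Measured as time saved, the figures come out close to the rounded 30% and 10% quoted above.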

Analysis

The figures for the 32-bit files clearly show that there is a difference between stock Debian 32-bit files and gcc-optimised files. If you start optimising, you’ll have to go all the way: optimising just the executables won’t do the trick. However, there is no significant difference between unoptimised and optimised 64-bit files. Also, it is unlikely that switches other than -march (or -mcpu and friends) make much difference. Even the Gentoo Compilation Optimization Guide acknowledges that the gcc manual says so.

Since Debian thrives on compatibility, all 32-bit i386-compatible binaries are indeed compiled for i386 and not for i686. If I remember correctly, Mandrake, for instance, has been optimised for i686 or i586. Multimedia applications such as audio encoders can take advantage of instructions unavailable on earlier CPUs, so optimising for i686 can indeed make a difference. This is probably why there is such a clear difference between the 32-bit files but next to no difference between the 64-bit files: x86_64 files can take full advantage of the x86_64 instruction set, as there are no legacy processors to worry about. Unfortunately, this can change in the future.

In the case of Vorbis decoding and encoding, the performance boost is 10–16% for 32-bit and 2% for 64-bit. I think it is fairly safe to say that compiling for x86_64 to get a performance boost just isn’t worth it. On the other hand, if you’re working with heavy multimedia content and need to decode and encode large chunks of data often, optimisation does matter in 32-bit environments.

While optimised 32-bit executables perform better on multimedia content, how much difference does optimisation make for general tasks? Will you notice a difference in web browsing, starting applications, or task switching? Is this difference significant, noticeable, or even relevant?

First of all, I think we can dismiss the impact optimisation has on listening to music. Decoding 48 minutes of Vorbis-encoded music takes just 16 seconds on my computer. That is well below 1% CPU load for real-time decoding, and hardly significant. Second, the user is usually the slowest part of a computerised task: the computer usually responds to the user faster than the user responds to the computer. Personally I’d consider anything less than 25% to be insignificant, but that is, of course, open to debate.
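The “well below 1%” figure follows from dividing decoding time by playback time. A quick sketch of that arithmetic, using the rounded 16 seconds and 48 minutes quoted above (both approximate), looks like this:

```python
def realtime_cpu_load(decode_seconds, audio_seconds):
    """Fraction of one CPU core needed to decode audio in real time."""
    return decode_seconds / audio_seconds


audio_seconds = 48 * 60   # roughly 48 minutes of audio
decode_seconds = 16       # rounded decoding time from the text

load = realtime_cpu_load(decode_seconds, audio_seconds)
print(f"real-time decoding load: {load:.2%}")
```

Even the slowest build in the table, at just under 18 seconds, stays under 1% by the same calculation.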

Conclusions

Optimisation on x86_64 has virtually no effect on performance. Processing multimedia content may speed up a little, but the difference is only about 2%. Simply spending a little more money on the hardware would improve the performance much more.

For 32-bit environments the answer is not as straightforward. There is a clear difference between unoptimised files for i386 and optimised files for athlon-xp. Decoding and encoding Vorbis files gain 10–16% in speed when optimised for the CPU. However, in other tasks such as web browsing, text editing, and listening to music, the multimedia extensions of the CPU’s instruction set are not that useful. Besides there being less room for optimisation, the user also reacts slowly.

Some people claim that compiling everything by hand makes their computers run faster overall, from login time to web browsing and even running Bash. My claim is that none of these benefit from CPU-specific optimisations so much that the difference would be noticeable. I claim that what matters most is the hardware, or the number of bytes that need to be loaded from disk. Pre-built GNU/Linux distributions often come with everything the user can imagine. Applications have been compiled against libraries the user will never need. Libraries may come with debug symbols. People who compile everything themselves often disable every feature they think they are not going to miss, thus reducing library dependencies.

In short, optimising for x86_64 is a waste of time for the time being. Optimising for i686 instead of i386 may speed up the processing of multimedia content, but the ordinary user will never notice any difference.